Rebirth Of The Metaverse, A Look Into The Future

When Mark Zuckerberg first unveiled his concept of the Metaverse, I was overwhelmed with excitement. However, I was left with mixed feelings once I saw the first versions of it.

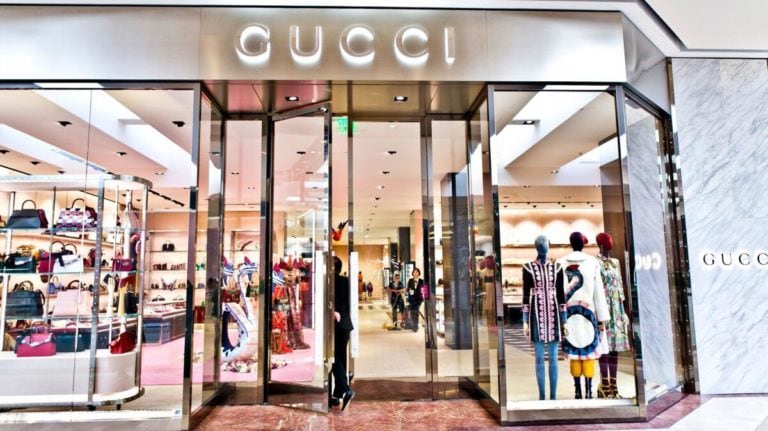

The cartoonish, Nintendo Mii-like characters left me underwhelmed, to say the least. Perhaps the sci-fi and anime fan in me wanted so much more, and I thought, “Is this the best $177 billion can achieve?” However, the optimist in me found it an acceptable first step and hoped to see more progress soon after.

Since then, I’ve been yearning to see more, but as time passed with no significant progress, I tucked it away as another potentially disappointing dream that may never reach my expectations within my own lifetime.

That was until I saw this video today. I’m blown away, and I am starting to think that a Matrix or Sword Art Online experience might be on the horizon.

If you are curious about how Mark’s cartoonish dream is evolving into a realm of ultra-realism, you’ll have to see it to believe it, so check out the video below and be prepared to get blown away.

Why We’re Talking Meta

Alright, do you remember back in October 2021 when Zuckerberg declared that Facebook was getting a name change? Yeah, it became Meta to align with the lofty goal of creating a metaverse: a digital universe where people can interact and do business.

Zuckerberg was so keen on this that he said you wouldn’t need a Facebook account. Here’s the catch: the initial version was basically your video game fantasy come to life.

No one was buying it. It was as if the audience said, “Mark, we asked for Star Trek, not a Nintendo Mii character.”

By Q4 2022, Meta’s Reality Labs division had reported a whopping $4.28 billion operating loss for the quarter, and it looked like the Metaverse was nothing but a costly daydream. But wait, the wind is changing direction. And this time, it’s not blowing smoke.

What’s New in Meta’s World?

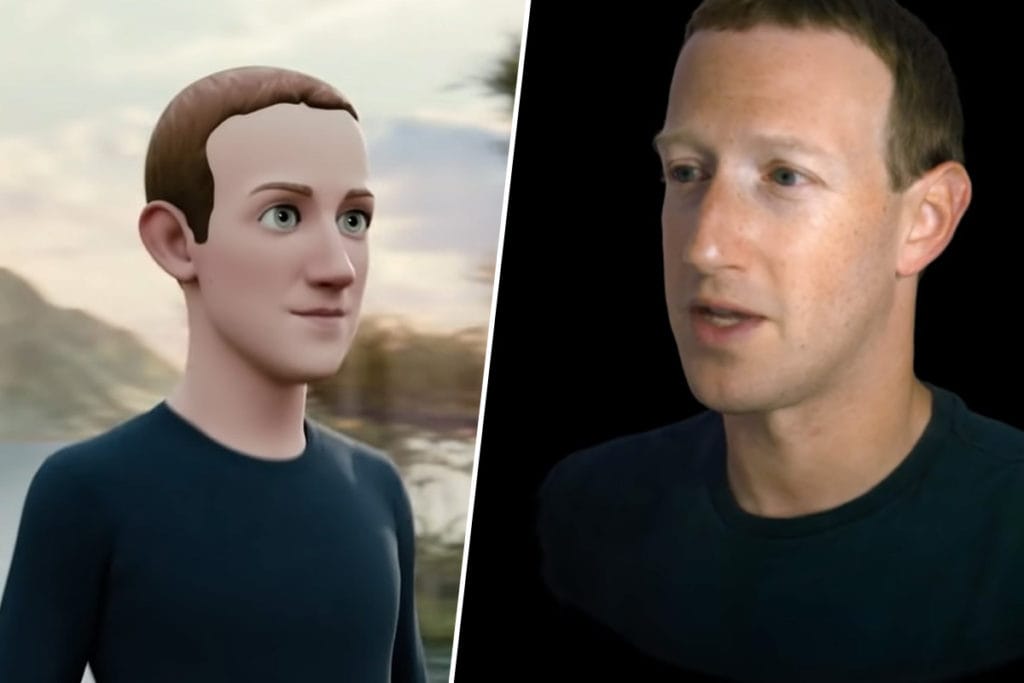

So, what’s the buzz about? Mark Zuckerberg and Lex Fridman, a computer scientist and podcaster, had a conversation in the Metaverse, and it’s nothing short of groundbreaking.

Think about this: They’re miles apart, but it looked like they were sitting across from each other at a cozy café. Mind-blowing. This isn’t some cheesy CGI trick; it’s the result of Pixel Codec Avatar research by Meta.

The initial public reaction was mostly apathy, to say the least. People probably thought, “This isn’t what we asked for. Is this the best you can do? Is this the best it will ever be?”

However, I wonder whether Meta really wasn’t listening to the public, as many had assumed. Knowing how technology works, I suspected there were genuine technical challenges to overcome, so I tempered my expectations and waited patiently.

But that long wait is over; now, the avatars in Meta are more lifelike than ever. Are they where I expect them to be? No, they still have a long way to go, but if this is a marathon, they’ve probably reached the first quarter of the run.

Unpacking the Pixel Codec Avatars Magic

1. Photorealistic Avatars

These aren’t your run-of-the-mill cartoonish avatars; we’re talking about doppelgängers so real they could make your mom do a double-take. Pixel Codec Avatars were developed by Meta’s Reality Labs, and the fundamentals are actually reasonably easy to understand.

First, a 3D model of your face is built, capturing the many nuances of your facial expressions. That model is then rigged so that, when you interact in a virtual environment, a face-tracking camera inside the headset matches your facial expressions to the 3D model in real time.
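To make the rigging idea concrete, here is a minimal sketch of a classic blendshape-style rig, assuming a toy "face" of just two vertices. It is not Meta's actual method; the names `NEUTRAL`, `EXPRESSION_DELTAS`, and `pose_face`, and all the numbers, are hypothetical and only illustrate how tracked expression weights could drive a 3D model.

```python
from typing import Dict, List, Tuple

Vertex = Tuple[float, float, float]

# Hypothetical toy data: a "face" made of two vertices (the mouth corners).
NEUTRAL: List[Vertex] = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]

# Per-expression offsets from the neutral pose, one offset per vertex.
EXPRESSION_DELTAS: Dict[str, List[Vertex]] = {
    "smile": [(0.0, 0.5, 0.0), (0.0, 0.5, 0.0)],
    "frown": [(0.0, -0.5, 0.0), (0.0, -0.5, 0.0)],
}


def pose_face(
    neutral: List[Vertex],
    deltas: Dict[str, List[Vertex]],
    weights: Dict[str, float],
) -> List[Vertex]:
    """Add each expression's offsets, scaled by its weight, to the neutral face."""
    posed = []
    for i, (x, y, z) in enumerate(neutral):
        for name, weight in weights.items():
            dx, dy, dz = deltas[name][i]
            x += weight * dx
            y += weight * dy
            z += weight * dz
        posed.append((x, y, z))
    return posed


# Weights a face tracker might report for one frame (assumed values).
frame_weights = {"smile": 0.5, "frown": 0.0}
posed_face = pose_face(NEUTRAL, EXPRESSION_DELTAS, frame_weights)
print(posed_face)
```

With a half-strength smile, both mouth corners move up by half of the full smile offset. A real system would track far more points and far subtler expressions, but the principle of "tracker output drives the model" is the same.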

2. Powered by Machine Learning

Remember, this isn’t just cutting and pasting your face on a digital stick figure. The system collects extensive data on how your face moves when you laugh, frown, or look puzzled. It’s like your avatar goes to acting school specifically to mimic you.

Over time, the machine learning part can begin to infer how an expression might look, borrowing from its database of other references to predict how your face would naturally react.

3. Efficient Data Usage

Sending data rather than video to produce realistic virtual avatars is not new or innovative. We do something similar today on a much smaller scale with cloud gaming, where only your inputs are passed on to a server to move a character in a game.

Cloud gaming’s fatal flaw today, however, is that it feeds video back to the player, and that bandwidth-heavy stream adds latency. This method, by contrast, sends compact world data both ways.

That implies a potential for interactions with the virtual world that return low-bandwidth data to us in real time, which is then processed locally.
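A rough back-of-the-envelope sketch shows why sending data instead of video matters. Every number here is an assumption for illustration, not a figure from Meta: 64 expression values per frame, 60 updates per second, and a 5 Mbit/s compressed video stream as the comparison. The helper names `encode_frame` and `decode_frame` are hypothetical.

```python
import struct

CODE_SIZE = 64                     # assumed number of expression values per frame
FRAMES_PER_SECOND = 60             # assumed update rate
VIDEO_BITS_PER_SECOND = 5_000_000  # assumed bitrate of a compressed video stream


def encode_frame(values):
    """Pack one frame of expression values as little-endian 16-bit floats."""
    return struct.pack(f"<{len(values)}e", *values)


def decode_frame(payload):
    """Unpack the bytes back into a list of floats on the receiving side."""
    count = len(payload) // 2  # each 16-bit float takes 2 bytes
    return list(struct.unpack(f"<{count}e", payload))


frame = [0.0] * CODE_SIZE
frame[0] = 0.5  # e.g. a half-strength smile
payload = encode_frame(frame)

avatar_bits_per_second = len(payload) * 8 * FRAMES_PER_SECOND
savings_factor = VIDEO_BITS_PER_SECOND / avatar_bits_per_second

print(f"{len(payload)} bytes per frame")
print(f"{avatar_bits_per_second / 1000:.2f} kbit/s for avatar data")
print(f"roughly {savings_factor:.0f}x less than the assumed video stream")
```

Under these assumptions, each frame is 128 bytes, which works out to about 61 kbit/s, or roughly 81 times less than the assumed video stream. The receiving headset would then rebuild the face locally from those few numbers.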

It would be akin to having a collective virtual world on the cloud that syncs with local virtual worlds, where each participant sees things from their own perspective.
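Here is a minimal sketch of that idea, assuming the shared world only tracks where each participant is. The classes `SharedWorld` and `LocalWorld` and the user names are hypothetical; a real system would sync far more than positions and would send small updates rather than full snapshots.

```python
from dataclasses import dataclass, field
from typing import Dict, Tuple

Position = Tuple[float, float, float]


@dataclass
class SharedWorld:
    """Cloud-side copy of the world: the single source of truth."""
    positions: Dict[str, Position] = field(default_factory=dict)

    def apply_update(self, user: str, position: Position) -> None:
        self.positions[user] = position

    def snapshot(self) -> Dict[str, Position]:
        return dict(self.positions)


@dataclass
class LocalWorld:
    """One participant's local copy, viewed from their own position."""
    owner: str
    positions: Dict[str, Position] = field(default_factory=dict)

    def sync(self, snapshot: Dict[str, Position]) -> None:
        self.positions = dict(snapshot)

    def view(self) -> Dict[str, Position]:
        """Positions of everyone else, relative to the owner."""
        ox, oy, oz = self.positions[self.owner]
        return {
            user: (x - ox, y - oy, z - oz)
            for user, (x, y, z) in self.positions.items()
            if user != self.owner
        }


cloud = SharedWorld()
cloud.apply_update("mark", (0.0, 0.0, 0.0))
cloud.apply_update("lex", (0.0, 0.0, 2.0))

mark_world = LocalWorld(owner="mark")
lex_world = LocalWorld(owner="lex")
for local in (mark_world, lex_world):
    local.sync(cloud.snapshot())

mark_view = mark_world.view()  # lex is offset by +2 on the z axis
lex_view = lex_world.view()    # mark is offset by -2 on the z axis
print(mark_view, lex_view)
```

Both participants receive the same small snapshot, yet each local world turns it into a different view, which is the part that would be rendered on the headset itself.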

4. Still in the Works

This tech has been under the hood since 2019 and is still not in our eager little hands. But stay tuned; I’m sure we’ll be seeing plenty more exciting things down the road.

It’s been nearly a decade since I was playing around with the Leap Motion controller, which used monochromatic IR cameras and three infrared LEDs to track intricate hand motions, overlaying a virtual bone rig on our hands.

And then there are the Intel RealSense cameras, which created accurate textured and colored 3D models of people using a hand-held device equipped with a RealSense camera or a RealSense webcam. Depth sensing of a similar kind powered Microsoft’s Xbox Kinect.

Integrating some of these technologies can take this progress to the next level. Hopefully, not too long from now, people will be able to do this from the comforts of their own homes, or they could go to a local service to get a digital version of themselves made professionally.

5. VR versus AR

I think VR is the ultimate goal, the dream we are all waiting for. But while aiming for the stars, we might neglect the potential to make small but significant advancements in AR.

Magic Leap, the unicorn company that promised to bring AR to life, has been criticized as vaporware because its actual product never met the standards it claimed it could achieve.

I’d like to see more practical applications where mixed reality, combined with Pixel Codec avatars, Leap Motion technology, and RealSense technology, can improve everyday productivity.

One thing is almost certain: it will change business communications forever. Imagine having a global all-hands meeting where we can collaborate on a whiteboard, share documents, evaluate and interact with real-world objects, and so on, all in real time.

AR and mixed reality offer real, practical applications for business productivity that we could all use today, and they would likely see a higher adoption rate, helping people transition into a VR future.

Key Considerations

If you’re already jotting down your Pixel Codec Avatars’ debut outfit, hold your horses. This tech is still in development. We also need to find out how compatible it’ll be with other VR systems or how much of a dent it’ll put in your wallet. So, get excited, but don’t break up with your 2D Skype sessions just yet.

As fascinating as this digital frontier is, keep a few things in mind:

Uncanny Valley

Many thought the realism would be too creepy, crossing into the so-called “uncanny valley” where things look almost human but not quite. Yet, the response has largely been positive, proving that the tech might be better than we feared.

Accessibility

For now, getting one of these avatars means traveling to Pittsburgh and spending hours being scanned. If this is going to be the future, it must be accessible. Good news: Zuckerberg is already thinking about a mobile solution.

Ethics

During their discussion, they made a good point about handling ethics. As Zuckerberg mentioned, it’s not uncommon for people to join a lobby and begin shooting each other, an acceptable and common interaction within many game lobbies.

But will people be able to separate virtual reality from reality? When it is too real and too visceral, how will it affect our cognitive interpretations of that? Will we be left with hidden psychological scars in our subconscious?

What about addiction? Will some people opt to spend the rest of their lives in virtual reality, like in the Bruce Willis film “Surrogates,” where the whole of society preferred being jacked in, living their perfect, invincible surrogate lives, over actually living physically in the real world?

All of these are tough questions that are still to be examined and answered, but as you might expect, many of the answers can already be gleaned from what we’ve learned from science fiction. Those stories might not be real, but they certainly present plausible outcomes.

What’s Next?

Sure, this is groundbreaking, but what’s next? Imagine conducting business meetings, attending concerts, or even having a dinner date in the Metaverse.

Zuckerberg hints at experiences “where you’re physically together,” expanding beyond simple video calls.

The technology will likely evolve to include full-body scans and quicker processes, making it a potential game-changer in how we interact online.

I’m already excited, but what else is out there? Meta has also announced its next-generation Quest 3 headset.

So, if you’re a tech junkie always looking for the next hit, keep your eyes peeled for that.

A Quick Wrap-Up

We’re on the cusp of making our virtual hangouts as realistic and expressive as catching up at your local café. With technologies like Pixel Codec Avatars pushing the boundaries, who knows, maybe the next time you log in, you’ll find your digital self making better facial expressions than you do in real life! Keep watching this space; the future is dialing in and wants to do more than just video calls.

Frequently Asked Questions

What does the metaverse really mean?

The metaverse is a collective, virtual shared space created by the convergence of physical and digital worlds, often accessible through technologies like virtual reality (VR) and augmented reality (AR). It serves as a digital universe where users can interact, socialize, and even conduct business.

How do I get into the Metaverse?

To enter the metaverse, you generally need a computer or a specialized headset for a more immersive experience. Once you’ve chosen a platform—like Roblox, Decentraland, or VRChat—you’ll create an avatar, which serves as your digital representation, and then you can explore, interact, and participate in activities.

Who owns the Metaverse?

Ownership of the metaverse is a complex issue, as it is often a collection of interconnected digital spaces. Some parts are owned by large corporations like Meta Platforms (formerly Facebook), while others are decentralized and governed by communities. The concept of ownership can vary depending on the specific platform’s rules.

What is the metaverse and how does it work?

The metaverse is a digital ecosystem where users, represented by avatars, can interact in real-time within a three-dimensional space. It combines elements of social media, online gaming, and virtual reality. Users navigate through different “worlds,” participate in activities, and engage in social or economic transactions.